Digital Art and AI: the speed of light and everything else

Artificial Intelligence (AI) has been one of the many buzzwords in recent years and is increasingly prevalent in every facet of our society, including the cultural and creative sector. While the boundaries of AI models remain unknown to us, it is already clear that AI is here to stay and it is therefore of utmost importance that those in the sector work towards being creative in responding to and using AI and technology.

In a series of two articles, Dr Bridget Tracy Tan takes us through what is emerging on the cultural horizon for artists and cultural professionals in the area of AI. In the first article, we explore what creating art in the digital era entails. In the face of expanding technological presence, what is the role of artists and cultural professionals in transforming artistic and creative practices?

“As with the cubists, we are asking for a new way of looking at things, but more totally, since we are more impatient and more anxious to go to the basic images. This explains the impact of Happenings, event pieces, mixed media films. We do not ask any more to speak magnificently of taking arms against a sea of troubles, we want to see it done. The art which most directly does this is the one which allows this immediacy, with a minimum of distractions.”

Defining the digital art genre: the advent of CRT (cathode ray tube) monitors and video in art

What constitutes the digital in art can be loosely defined as a practice that employs technology to accumulate data expressed as numbers into a visualisation process. Because this process allows almost any kind of ‘data’ to be logged, mixed and expressed, the possibilities in the digital universe are boundless. An early example of the ‘digital’ art genre is video art. With the invention of the Sony Portapak (a portable video camera) in 1965, the authorship involved in ‘collecting’ audio-visual data and re-presenting it directly and intact became a single seamless and convenient process.

In 1971, Peter Campus produced a work he named “Double Vision” where he fed two video camera recordings through a mixer.2 This resulted in an intriguing visual output that could arguably be seen as the earliest innovative layering of data visualisation complete with respective soundtracks, mimicking live experience as an audio-visual presentation.

How artificial intelligence has unfolded in contemporary visual experiences

AI, or artificial intelligence, is a programmable set of algorithms, or mathematical codes, that serve as instructions on how to manage the data running through a programme. This process can extend from machine learning (straightforward management of data output) to deep learning (more complex management of data output).

80% of the information we process in our brain comes from sight. A further 10% comes from sound. It follows that the reality we create in our brain comes largely from what we see.3 AI is a processing tool very much like other technological tools, such as extended reality (XR), virtual reality (VR), augmented reality (AR), holography and naked-eye 3D, to name but a handful. These tools can work together with other material fabrications (such as Barrisol or parallax barriers4) that refract the processing of data, in the creation and presentation of digital art products.

Digital art renderings are directly related to the use of data transmission in code format, through electrical pulses or light pulses for example. Because we can break down any data into such codes, we are able to switch and reorder those codes, and transmit them through electrical current or through light, to generate a different data output that our senses of sight and sound apprehend.
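As a minimal illustration of this idea, the Python sketch below treats a tiny greyscale image as a grid of number codes and shows that reordering or switching those codes produces a different picture. The 3x3 image and the function names `mirror` and `invert` are hypothetical, chosen only for illustration; they do not describe any particular artwork or tool.

```python
# Hypothetical 3x3 greyscale image: each number is a brightness code
# (0 = black, 255 = white). The values are made up for illustration.
image = [
    [0, 128, 255],
    [0, 128, 255],
    [0, 128, 255],
]


def mirror(pixels):
    """Reorder the codes in each row so the image is flipped left to right."""
    return [list(reversed(row)) for row in pixels]


def invert(pixels):
    """Switch each code to its opposite brightness (dark becomes light)."""
    return [[255 - value for value in row] for row in pixels]


mirrored = mirror(image)
inverted = invert(image)

for name, grid in (("original", image), ("mirrored", mirrored), ("inverted", inverted)):
    print(name, grid)
```

The same numbers, reordered or switched, yield a different image when sent to a screen, which is the sense in which recoding data generates a different output for the eye.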

AI in image making

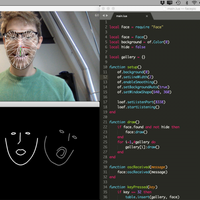

In 2021, the artist Keane Tan won an art award for his artwork titled “A Dramatic Cinematic for Our Century”, using oil on canvas to create his image. The artist disclosed that the methodology behind the image creation involved the use of AI and machine learning technology. AI image generators are not new. Examples of machine learning generators include generative adversarial networks (GANs)5 and variational autoencoders (VAEs).6

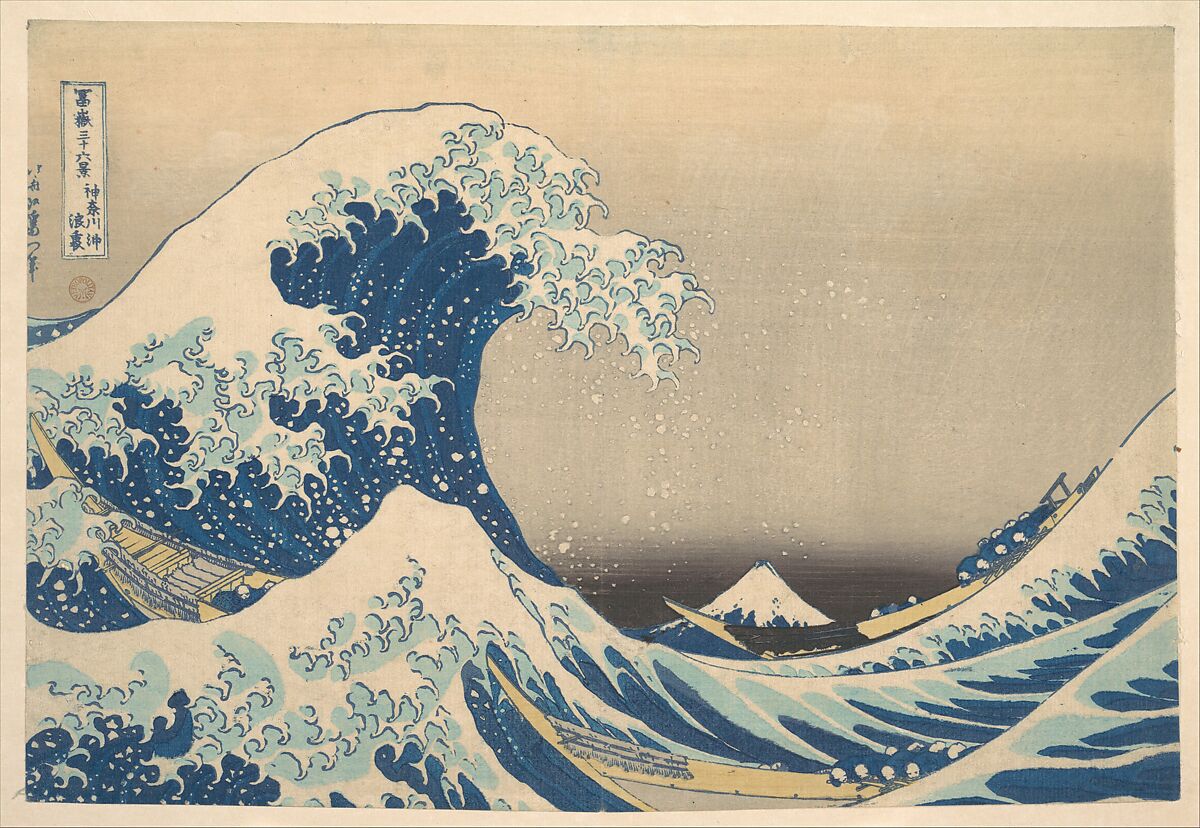

Despite the inscrutable names, in machine learning contexts this kind of AI generator is simply fed data sets, which may include, for example, a series of words, descriptions or original images. The generator then produces an image that is the result of compositing all the data sets fed. In the case of Keane’s work, there is an obvious reference to the Edo-era printmaker Hokusai’s “Kanagawa-oki Nami Ura”, or “The Great Wave off Kanagawa”.

1. Keane Tan, A Dramatic Cinematic for Our Century, 2021, Oil on Canvas © UOB Painting of the Year 2021

2. Katsushika Hokusai, Kanagawa-oki Nami Ura, c. 1830-1832, nishiki-e, H. O. Havemeyer Collection, Bequest of Mrs. H. O. Havemeyer, 1929, Metropolitan Museum of Art © Metropolitan Museum of Art

It is likely other sets of data were fed to the generator, for example, the words ‘dramatic’ or ‘cinematic’ which appear in the title of the work. Further interventions in machine learning can refine aspects of how the algorithm processes data. The algorithm can also shift by recognising patterns and associations: for example, using the word ‘shipwreck’ beside ‘cinematic’, or ‘dystopian’ beside ‘drama’ or ‘cinema’.7

Like the Portapak, AI and digital art creation can be described as ingesting and digesting preexisting data to ‘play back’ reconfigured paradigms. Employing AI in artistic processes enables opportunistic, creative outcomes at exponential speeds. These are sometimes radical departures from what is traditional, what is conventional.8 In Keane Tan’s example, utilising machine learning for the process demonstrated how new configurations were a creator’s gambit, resulting in a serendipitous collaboration with AI. The digital rendering successfully morphed references from history, nature, modern life, popular culture, colours and thoughts across the spectrum of the visual universe at an accelerated pace, creating a transcendent ‘superimage’.

The use of technology to breathe new life into art and art's history

3. The animation based on the historic Chinese painting Along the River During the Qingming Festival in Expo 2010, Shanghai. © AndyHe829, Wikimedia Commons

A decade before Keane Tan’s win, the 2010 Shanghai Expo, with the theme “Better City – Better Life”, showcased what it termed a ‘digital tapestry’ recreated from the original panoramic painting, ‘Qingming shanghe tu’ (Along the River During the Qingming Festival). The company Crystal CG produced a 6.3m tall animated digital mural that was projected across 130m.9 New elements consistent with the old iconography, including people, trees, animals and houses, scaled up the original scene into a larger, dynamic composition. The display showed the scenes in both daytime and nightfall. To create such an environment, modelling and coding are interwoven in a cross-platform engine such as Unity10, reinterpreting the original, static painting on silk as animation.

Features include allowing the characters and vessels to move in the scene, and the background to transition from day to night. Algorithms control how data generates the scenes visualised, such that the viewer becomes immersed in the landscape of the work. AI, for example, may be employed to autocomplete coding that suggests the way an animation is driven based on predictable realities, such as the direction of wind, waves or the footsteps of an animal, and degrees of light and dark as time passes.11
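To give a sense of how an algorithm might drive a gradual day-to-night transition, here is a minimal Python sketch. It is an assumption for illustration only, not the method used for the Expo animation: the functions `light_level` and `shade`, the cosine curve and the sample colour are all hypothetical.

```python
import math


def light_level(hour):
    """Return a brightness from 0.0 (midnight) to 1.0 (noon) on a 24-hour clock.

    A cosine curve is assumed here simply because it rises and falls smoothly.
    """
    return 0.5 - 0.5 * math.cos(2 * math.pi * (hour % 24) / 24)


def shade(colour, hour):
    """Scale an (R, G, B) colour by the light level at the given hour."""
    level = light_level(hour)
    return tuple(round(channel * level) for channel in colour)


# Hypothetical colour for a rooftop in the scene, shown at four times of day.
rooftop = (200, 180, 120)
for hour in (0, 6, 12, 18):
    print(hour, shade(rooftop, hour))
```

In a real engine the same principle would apply to lighting across the whole scene rather than to a single colour, with the passage of time as the only input.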

The world of digital art, including forms of AI, is one that is as vast as it is precise. This world mirrors the ‘intermedia’ that characterises human experience at its most immediate, measurable, and visceral. AI in technology leverages patterns of human thought and human experience, rapidly upcycling an otherwise passive and boundless archive of big data. Random and systematic interventions by both humans and machines calibrate precision-driven dimensions and interpretations of the world around us, challenging our perspectives and enlarging our experiences. Across visual and performing arts today, the digital paradigm offers tremendous untapped potential to comprehensively re-imagine histories, the future and everything in between.

Cover Image: The animation based on the historic Chinese painting Along the River During the Qingming Festival in Expo 2010, Shanghai. © AndyHe829, Wikimedia Commons

Read the second article of the series by Dr Bridget Tracy Tan on the challenges artmakers face in the era of AI here.

References

1. https://www.nbaldrich.com/media/pdfs/collaborative-reader3.pdf

2. https://www.vdb.org/titles/double-vision

3. Man, D. and Olchawa, R. (2018) ‘Brain Biophysics: Perception, consciousness, creativity. Brain Computer Interface (BCI)’, Biomedical Engineering and Neuroscience, pp. 38–44. doi:10.1007/978-3-319-75025-5_5

4. Barrisol is actually a comprehensive product solution that involves the use of a patented PVC with flammable/heat classification appropriate for use in lighting and displays. A parallax barrier is an apparatus with strategic, precision slits that can be used to obstruct image and light emissions to control how the viewer absorbs such. A common use for this is to allow a viewer to experience 3D, without special equipment, simply by creating a false depth, controlling what and how the eye apprehends in layers and at angles. Stereoscopic imaging is an example of the use of parallax barriers.

5. https://machinelearningmastery.com/what-are-generative-adversarial-networks-gans/

6. https://paperswithcode.com/method/vae

7. The author is not privy to the exact inputs Keane Tan used in deploying machine learning during the creation of his artwork. These are suggestions based on the image and the title given.

8. The probability of generating identical images from the input of the same data remains unpredictable. There is a concept in AI where memorization occurs, and identical images are generated. Even in this circumstance, elements resulting from the transfer of coded data such as pixel distribution and image ‘noise’ will invariably, even minutely, differ.

9. The original painting was by Zhang Zeduan (AD 1085-1145). The work was a handscroll of ink on silk, measuring 5.28m long and only about a foot high. It captured the daily life of people during the Northern Song (960-1127 AD) in what was then the capital, Bianjing (modern-day Kaifeng in Henan Province).

10. Unity is an engine originally developed for gaming design in an immersive environment but has evolved to be used in different applications across genres and platforms.

11. An example of data driven machine learning and processing is mathematical modelling employed during the recent pandemic, to predict possible scenarios compositing the existing and current data. This technology is thus able to plot and anticipate future outcomes based on real time observations, calculations, and probabilistic reasoning.

About the Author

Dr Bridget Tracy Tan is Director for the Institute of Southeast Asian Arts and Art Galleries at Nanyang Academy of Fine Arts. Formerly a curator at the Singapore Art Museum (now National Gallery Singapore), she holds a First Class Honours degree in History of Art. Her PhD in practice-led research as a curator and critical art historian was obtained from the University of the Arts London. The thesis critically explored Southeast Asian museology and Southeast Asian curating in contemporary paradigms that extend into global platforms, specifically biennales. Dr Tan continues to assemble exhibitions and facilitate the teaching of Southeast Asian arts. She has contributed essays and articles on local and regional artists in seminal publications. Over the last two decades, she has also judged regional and international competitions for photography and painting.
